This week’s paper describes a newly discovered visual method that jumping spiders use to judge how far away objects are. Jumping spiders need good depth perception so they know how far to jump to catch their prey, which includes fruit flies. Animals use different methods to determine depth, such as binocular vision in humans and motion parallax in birds and insects. In one of the first demonstrations of its kind, the authors show how defocused images in the eye can be used to measure the absolute distance of objects. The article by Nagata et al. appeared in a recent issue of Science.

Introduction to retinas

By Sunshineconnelly at en.wikibooks

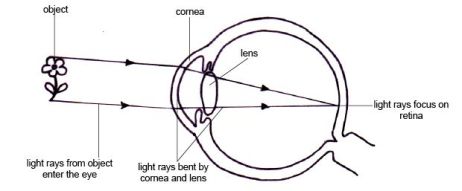

The lenses in our eyes focus incoming light onto the back of the eye, on a tissue called the retina. The retina contains specialized light-sensing cells called photoreceptors; in humans these are the rods and cones. These cells carry light-sensitive proteins in their membranes (also called photoreceptors) that change their activity when light hits them. Each type of photoreceptor responds best to a particular range of wavelengths. For instance, human cone cells come in three kinds: one is activated most efficiently by red light, one by green light, and one by blue light. When photoreceptors are activated by light, they pass the signal on to other cells in the retina and eventually to the brain, which interprets it as a visual image.

Light must be focused on the retina for us to perceive a sharp image. In people who are near- or far-sighted, the eyeball is the wrong shape, so the light that reaches the retina is not precisely in focus. Imagine a pinpoint of light in front of you. If it activates a small, precise spot on the retina, it will be perceived as a small pinpoint. If the light is not focused properly onto the retina, it spreads over a wider area, more photoreceptors are activated, and it is perceived as a bigger, blurry smear of light.

A near-sighted eye will focus the image in front of the retina. Corrective lenses (glasses) can make it so the image will be correctly focused onto the retina. Image from glassian.org.


Depth Perception

There are a number of ways that animals perceive depth in a field of view, but I will just mention the two main ones.

1) Binocular vision: In animals with two eyes that have overlapping fields of view (like us), the brain can calculate depth by comparing the slightly different images of the same scene that reach the two eyes (binocular disparity).

2) Motion parallax: If you move your head while looking at a scene, objects that are closer appear to move more than objects that are farther away. This works in humans, though it is not our main way of determining depth. For many animals with eyes on the sides of their heads, such as many birds and insects, it is a major way of perceiving depth.
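
Both cues are forms of triangulation. As a rough sketch, assuming an idealized pinhole-camera eye, binocular depth follows the textbook stereo relation Z = f·B/d (focal length f, separation between the eyes B, disparity d between the two images), and for an observer moving sideways at speed v, an object directly in front at distance D sweeps across the visual field at about v/D radians per second. The function names and numbers below are hypothetical, chosen only to illustrate the geometry.

```python
def depth_from_disparity(focal_length, baseline, disparity):
    """Pinhole stereo model: depth Z = f * B / d.

    All inputs share one length unit (here metres). A larger disparity
    between the two eyes' images means a closer object.
    """
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point seen by both eyes")
    return focal_length * baseline / disparity


def parallax_angular_speed(observer_speed, distance):
    """Approximate angular speed (rad/s) of an object directly in front of an
    observer who is moving sideways: v / D. Closer objects sweep past faster."""
    if distance <= 0:
        raise ValueError("distance must be positive")
    return observer_speed / distance


if __name__ == "__main__":
    # Hypothetical eye: 2 cm focal length, eyes 6 cm apart, 0.4 mm disparity.
    print(depth_from_disparity(0.02, 0.06, 0.0004))
    # Moving sideways at 1 m/s: compare a near object with a far one.
    for d in (2.0, 10.0):
        print(d, parallax_angular_speed(1.0, d))
```

In both cases a single measured quantity (disparity or angular speed) shrinks as distance grows, which is what lets the brain read it as depth.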

In this study of jumping spiders, binocular vision was ruled out because spiders with all but one eye covered could still jump accurately onto their prey. Motion parallax was also ruled out because jumping spiders do not move their heads before they jump. This left the authors asking what else could account for the spiders’ excellent depth perception.

Spidey vision

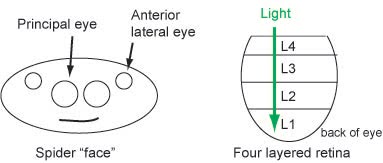

Jumping spiders have four pairs of eyes (eight eyes in total). On the front of the head are two principal eyes (the anterior median eyes) and, beside them, two anterior lateral eyes. The principal eyes are larger and are the ones used for depth perception. The retina at the back of each principal eye has four layers, labeled L1-L4.

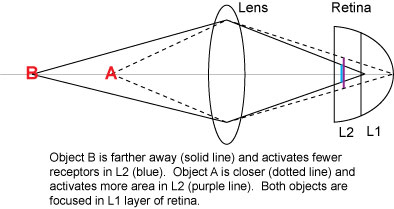

Nagata et al. demonstrate that retinal layers L1 and L2 contain photoreceptor proteins that are most sensitive to green light and considerably less sensitive to red light. Layers L3 and L4 contain receptors that are sensitive to UV light. Of L1 and L2, L1 sits where incoming light comes into sharp focus. L2 is closer to the lens, so light passes through it before it has fully converged, and the image on L2 is out of focus. This arrangement gives the first clue to how spiders detect depth.

Imagine an object A that is closer to the spider than object B. Because of the way lenses bend light, a closer object comes into focus farther behind the lens, so its out-of-focus image on L2 is larger. The closer object A will therefore activate a larger area of layer L2, whereas the farther object B will activate fewer receptors in L2 (see figure below, adapted from Nagata et al., Figure 1D).
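
A thin-lens model makes this geometry concrete. The sketch below assumes an idealized thin lens (1/f = 1/u + 1/v, with object distance u and image distance v) and uses similar triangles to get the diameter of the blur circle on a layer sitting a distance s behind the lens: A·|v - s|/v, where A is the aperture. The focal length, aperture, and position of L2 are made-up illustrative values, not measurements from Nagata et al.

```python
def image_distance(focal_length, object_distance):
    """Solve the thin-lens equation 1/f = 1/u + 1/v for the image distance v."""
    if object_distance <= focal_length:
        raise ValueError("object must lie beyond one focal length")
    return focal_length * object_distance / (object_distance - focal_length)


def blur_diameter(focal_length, aperture, layer_distance, object_distance):
    """Blur-circle diameter on a layer `layer_distance` behind the lens.

    The cone of light converges to a point at v; by similar triangles its
    width at distance s behind the lens is aperture * |v - s| / v.
    """
    v = image_distance(focal_length, object_distance)
    return aperture * abs(v - layer_distance) / v


# Hypothetical eye, all lengths in millimetres (NOT values from the paper).
F = 0.5     # focal length for green light
A = 0.4     # aperture diameter
S_L2 = 0.5  # assumed position of L2: the focal plane for very distant objects

for u in (10.0, 20.0, 40.0):
    print(f"object at {u:.0f} mm -> blur on L2 = {blur_diameter(F, A, S_L2, u):.4f} mm")
```

Because L2 is placed here at the focal plane for very distant objects, the blur works out to A·f/u: halving the object's distance doubles the blur on L2, matching the idea that a closer object activates a larger area of L2.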

The number of activated receptors in L2 could signal to the brain how far away an object is: the bigger the area of activation in L2, the closer the object. In other words, the image is in focus on L1, which provides a sharp representation of the object, while the “defocus” signal in L2 encodes information about the object’s distance.
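
To connect blur size to the number of activated receptors, here is a hypothetical sketch that counts receptors whose centres fall inside a circular blur. It assumes a square receptor grid with a fixed spacing, which is a simplification for illustration; the real receptor mosaic of the spider retina is not modeled.

```python
import math


def receptors_in_blur(blur_diameter, spacing):
    """Count receptors on a square grid (centre-to-centre `spacing`) that lie
    inside a circular blur of diameter `blur_diameter` centred on a receptor."""
    radius = blur_diameter / 2.0
    n = math.ceil(radius / spacing)  # grid indices beyond n cannot be inside
    count = 0
    for i in range(-n, n + 1):
        for j in range(-n, n + 1):
            if (i * spacing) ** 2 + (j * spacing) ** 2 <= radius ** 2:
                count += 1
    return count


# Blur diameters measured in units of the (hypothetical) receptor spacing.
for diameter in (8.0, 4.0, 2.0):
    print(diameter, receptors_in_blur(diameter, 1.0))
```

Because the count tracks the area of the blur, halving the blur diameter cuts the number of activated receptors roughly fourfold, so the count is a sensitive signal of distance.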

Testing the theory

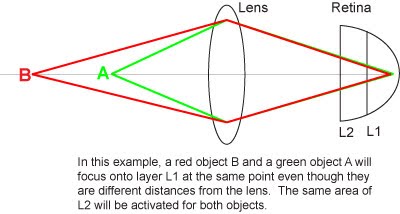

Light of different colors (wavelengths) is bent by slightly different amounts as it passes through a lens, an effect called chromatic aberration, so each color has its own focal distance. In other words, different colors come into focus at different distances behind the lens. A pure red light and a pure green light will therefore focus onto the same layer of the retina only if the red object is farther from the lens than the green one (figure below adapted from Figure 3A).
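
Chromatic aberration can be sketched with the thin-lens equation by giving red light a slightly longer focal length than green, since red light is bent less. The focal lengths below are hypothetical; the code finds where a red object must sit so that its image lands at the same depth as the image of a green object 20 mm away.

```python
def image_distance(focal_length, object_distance):
    """Thin-lens equation 1/f = 1/u + 1/v solved for the image distance v."""
    if object_distance <= focal_length:
        raise ValueError("object must lie beyond one focal length")
    return focal_length * object_distance / (object_distance - focal_length)


def object_distance_for_image(focal_length, image_dist):
    """Inverse of image_distance: the object distance u that forms an image at v."""
    if image_dist <= focal_length:
        raise ValueError("image must lie beyond one focal length")
    return focal_length * image_dist / (image_dist - focal_length)


# Hypothetical focal lengths in mm: red is bent less, so its focal length is longer.
F_GREEN = 0.500
F_RED = 0.505

u_green = 20.0
v = image_distance(F_GREEN, u_green)       # where the green image forms
u_red = object_distance_for_image(F_RED, v)  # red object that focuses at the same depth
print(f"green object at {u_green:.1f} mm and red object at {u_red:.1f} mm "
      f"both focus {v:.4f} mm behind the lens")
```

With these made-up numbers the red object has to be noticeably farther away (about 33 mm) for its image to form at the same depth as the green object's.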

Remember that the receptors in layers L1 and L2 of the retina are most sensitive to green light. In natural light, the spider therefore probably responds mostly to the green part of the spectrum. The brain has learned that a certain amount of defocus in L2 under green illumination (which is plentiful in sunlight) corresponds to a certain distance from the spider. The authors tested this by putting a spider in a container with a few moving fruit flies and changing the illumination to pure green light from LEDs. The spider still judged distance accurately and caught the fruit flies without a problem.

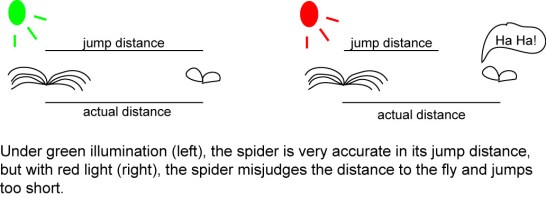

Next, the authors changed the illumination of the chamber to pure red light. Let’s predict what will happen in this case. Although the receptors in L1 and L2 prefer green light, red light at a high enough intensity will still activate them as strongly (the authors ran controls to test this). The spiders, however, are used to getting depth information from green light: when a certain number of photoreceptors in L2 are activated, the brain reads this as a certain distance under green light. Because red light comes into focus farther behind the lens, a red-lit object produces a larger blur on L2 than a green-lit object at the same distance, so a red object would have to be farther away to activate the same number of L2 photoreceptors. The brain does not know that the light is now red, or that red light has a different focal distance. Therefore, when a given number of L2 photoreceptors is activated, the brain will interpret the red object as a closer green object. One would predict that under red light the spiders would jump short.
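
The prediction can be sketched numerically. Assuming an idealized thin-lens eye with hypothetical focal lengths, aperture, and L2 position, the code below computes the blur on L2 for an object 20 mm away under green and under red light, then decodes both blurs with a rule calibrated for green light only. The decoder is a stand-in for the spider's green-tuned brain, not a model proposed by the authors.

```python
def image_distance(focal_length, object_distance):
    """Thin-lens equation 1/f = 1/u + 1/v solved for the image distance v."""
    return focal_length * object_distance / (object_distance - focal_length)


def blur_on_layer(focal_length, aperture, layer_distance, object_distance):
    """Blur-circle diameter on a layer at `layer_distance` behind the lens."""
    v = image_distance(focal_length, object_distance)
    return aperture * abs(v - layer_distance) / v


def decode_distance_green(blur, f_green, aperture, layer_distance):
    """Invert the green-light blur relation, assuming the image forms behind
    the layer (v > layer_distance): blur = aperture * (1 - s / v)."""
    v = layer_distance / (1.0 - blur / aperture)
    return f_green * v / (v - f_green)


# Hypothetical optics in mm (illustrative, not measured values).
F_GREEN, F_RED = 0.500, 0.505
APERTURE = 0.4
S_L2 = 0.500  # assumed position of L2

true_distance = 20.0
for name, f in (("green", F_GREEN), ("red", F_RED)):
    blur = blur_on_layer(f, APERTURE, S_L2, true_distance)
    perceived = decode_distance_green(blur, F_GREEN, APERTURE, S_L2)
    print(f"{name:5s} light: true {true_distance:.1f} mm, perceived {perceived:.1f} mm")
```

Under green light the decoder recovers the true 20 mm; under red light the larger blur is read as an object only about 14 mm away, so this toy model predicts a jump that falls short.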

The results of the experiment under red illumination are consistent with this model: the spiders consistently jumped too short and often missed the fruit flies altogether.

Conclusion

This is one of the first examples of an animal using “defocus” to determine depth. The arrangement of the two green-sensitive retinal layers lets the spider use one layer (L1) to form a sharp image of the object and the other (L2) to judge its distance. The authors suggest that this finding could inspire developments in computer vision. I like the idea of technology learning from solutions that biology arrived at over a long history of evolution.